Apple has pioneered smartphone and tablet accessibility features for years, making the iPhone and iPad ideal for users with specific needs. The iOS 14 and iPadOS 14 release is no different, as Apple has continued to improve the accessibility capabilities of its devices in the upcoming version.

Apple is as aware as anyone that features and apps for disabled people are very important. This year’s updates include features like Sound Recognition, sign language prominence in FaceTime Group calls and Headphone Accommodations, all of which focus on people with speaking and hearing issues.

iOS 14 Accessibility Features

Let’s take a look at each of these features and see how they are going to help iPhone and iPad users worldwide.

Sound Recognition in iOS 14

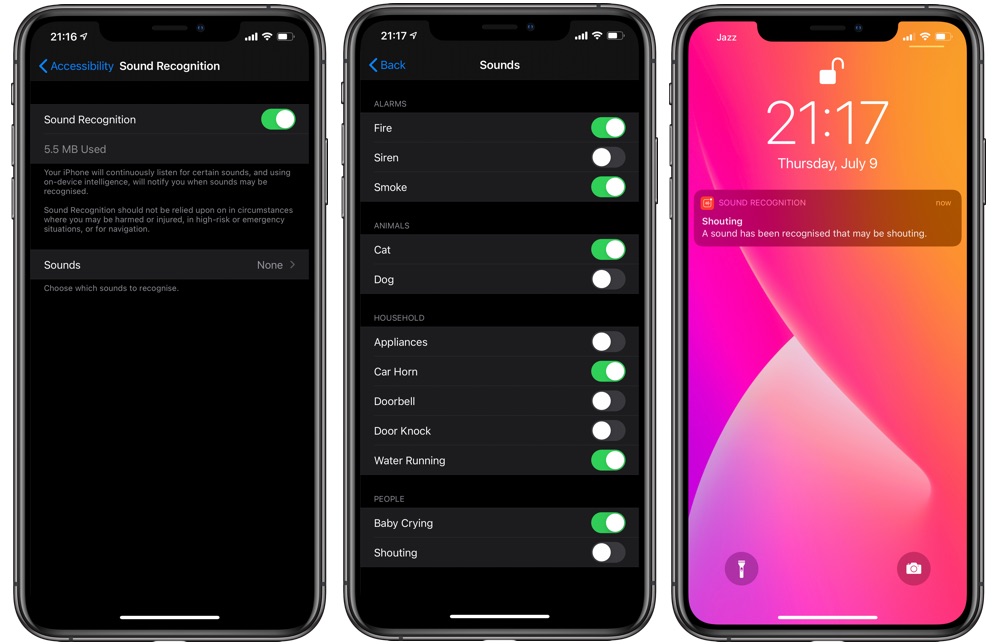

iOS 14 brings a new Sound Recognition feature to the iPhone, which makes the device listen for certain sounds using on-device intelligence. If it detects one of these sounds, it notifies the user.

Sound Recognition is capable of recognizing a wide range of sounds, including fire alarms, appliance bells, doorbells, smoke detectors, sirens, cats, dogs, running water, babies crying and people shouting. When the iPhone detects any of these sounds, it sends a notification mentioning what kind of sound it has detected. If you keep getting unnecessary notifications for a sound, you can also tell the iPhone to stop sending alerts for that particular sound for the next 5 minutes, 30 minutes or 2 hours, which is very useful.

While the Sound Recognition feature is designed for users who have hearing loss, it can also be useful for those who are always wearing headphones and want to stay aware of their surroundings.

Sound Recognition in iOS 14 can be enabled by going to Settings -> Accessibility -> Sound Recognition and turning the toggle on. After activating the feature, you can go to the Sound section on the same page and enable the toggles for the individual types of sounds you want to be alerted about.

Sign Language Prominence in FaceTime Group Video Calls

If you have ever used the FaceTime Group video call feature, you will know that the FaceTime tiles resize based on who is currently talking, giving prominence to the person speaking. With iOS 14, Apple has brought the prominence feature to those who use sign language to communicate.

Now iOS 14 will detect when a user starts signing and automatically make his or her tile larger. This makes it easier for other people on the call to pay attention to the person who is using sign language.

The prominence feature for sign language works when the FaceTime Automatic Prominence feature is enabled. To enable it, go to Settings -> FaceTime -> Speaking -> Turn On.

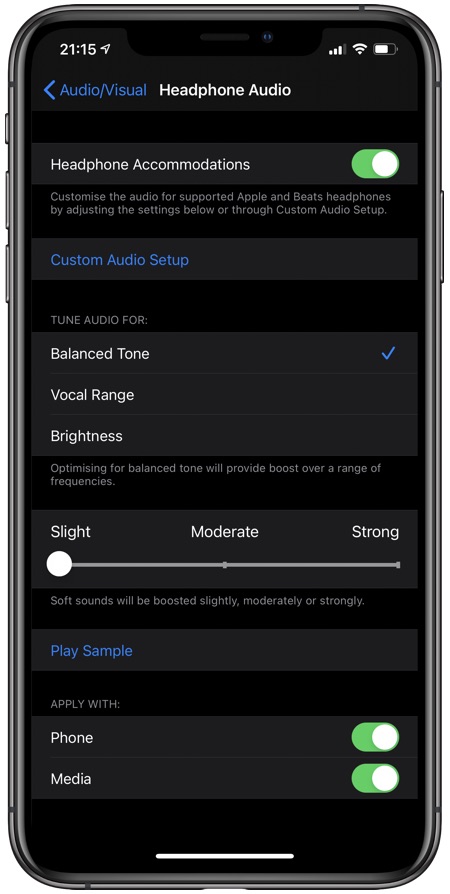

Headphone Accommodations

Another cool iOS 14 Accessibility feature is called Headphone Accommodations. This feature is designed to amplify soft sounds and adjust certain frequencies in order to make music, movies, calls, podcasts and other audio sound more crisp and clear.

You can go to Settings -> Accessibility -> Audio/Visual -> Headphone Accommodations to enable this feature. There you can choose between various audio tuning settings, select how much you want to boost soft sounds and more. In the Headphone Accommodations settings you can also tap on Custom Audio Setup, after which the iPhone will guide you through the customization process.

You need supported headphones in order to use the Headphone Accommodations feature. Most Apple headphones, including AirPods, AirPods Pro, Beats headphones and EarPods, are supported.

Back Tap Feature

Another really nice Accessibility feature introduced in iOS 14 is called Back Tap. This feature allows users to perform various actions by double or triple tapping on the back of their iPhone.

We have done a detailed article on the iOS 14 Back Tap feature along with a hands-on video, which you can see here.

There you go folks, these are some of the iOS 14 Accessibility features that will be making their way to your iPhone in the fall. What do you think about these features? Share your thoughts in the comments below.